The term plastic is somewhat relative – it actually means any material that is moldable, shapable, or ductile. At extremely high temperatures even rocks can become plastic. The most common use of the word is likely to describe a synthetic material made from a wide range of organic polymers. The first plastic made from synthetic materials was Bakelite, which was invented in 1907. It was used in the 1930s to make cameras such as the Kodak Baby Brownie and the Purma Special. Plastic materials such as methyl methacrylate, or acrylic (often better known by its trade names, e.g. Lucite, Plexiglas, Perspex), were developed in the 1920s, largely to make unbreakable eyeglasses.

There was little interest in the use of plastics as substitutes for optical glass until WW2. Many plastic materials were examined during the war period, but few were found to have the right optical characteristics for use in photographic lenses. After the war, research continued, and plastics replaced glass in a number of non-critical optical applications. But in the realm of photography, few if any manufacturers gave up their dependence on glass, save perhaps for lenses in inexpensive box cameras. In 1946 Andrew Hecht wrote an article on plastic lenses [1]. The first statement he made was “Plastic lenses are here, and they are here to stay…”. Hecht suggested they would only be economical in lenses of 2.5” or more in diameter. The article focuses on Thomas S. Curtis Laboratories, which produced thousands of lenses up to 18” in diameter for the US Army. These lenses were manufactured from large slabs produced in electric furnaces, which were then cut, shaped on lathes, ground, and polished. The article seemed to focus on lenses for applications such as industrial magnifiers.

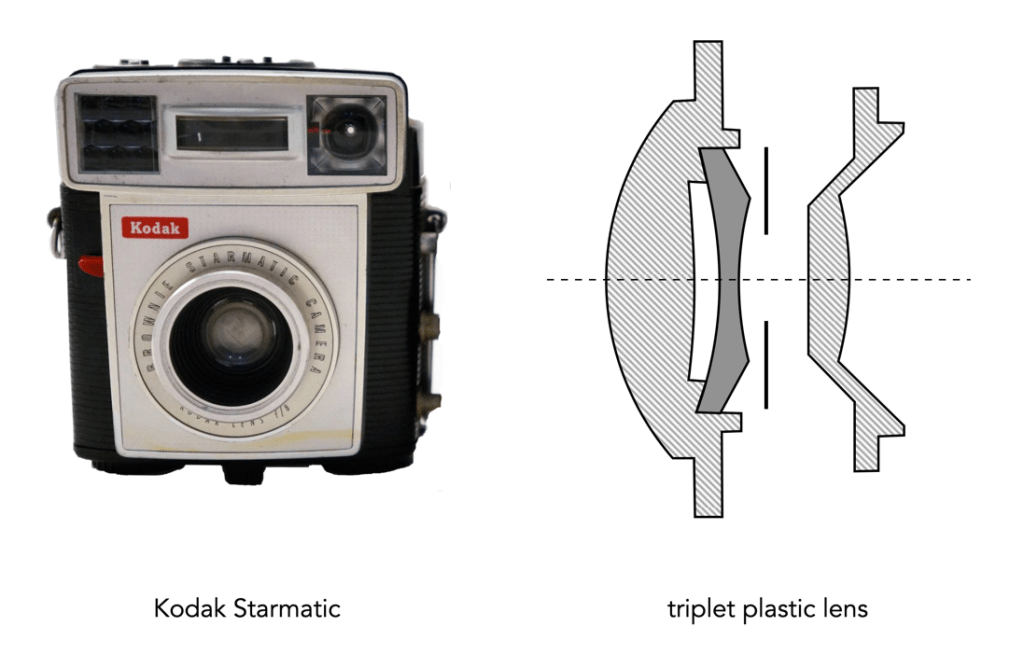

The 1950s saw a growing trend towards the idea of using plastics in cameras. In 1952 Kodak was experimenting with plastic viewfinders in its simple cameras, and by 1957 was making injection molded meniscus lenses for use in snapshot cameras. In 1959 it was using triplet lenses with an f/8 aperture in its Starmatic Brownie cameras. The March 1961 issue of Modern Plastics [2] had an article on plastic lenses, with a cover touting “Lenses – The Focus is on Plastics”. The article describes large plastic lenses made of acrylic, 4-30” in size, used in applications such as magnifiers and reflectors. It lists the many benefits of plastic lenses: reduced weight, greater light transmission, imperviousness to thermal shock, and resistance to chipping. However, of the varied applications, it suggests the “prospects are not overly bright for injection molded methacrylate”, largely due to the refractive index. Doubts had already started to set in.

Lloyd Varden investigated plastic lenses in the August 1961 issue of Popular Photography [3]. He describes a long list of properties that made glass superior to plastic: (i) range of refractive indexes and dispersion values available, (ii) homogeneity, (iii) physical hardness, (iv) transparency, (v) selective absorption, i.e. absence of colour, (vi) light and atmospheric stability, (vii) freedom from excessive bubbles, (viii) thermal expansion, (ix) moisture absorption, (x) chemical reactivity and solubility, and (xi) economy in manufacturing. Unfortunately, plastics of the period could not match up to all these requirements. Plastics could have a high degree of transparency, a low selective absorption, and an absence of bubbles, but failed in other categories such as physical hardness, making them susceptible to scratches, and the combination of a high refractive index with low dispersive power.

In 1964 Leonard Lipton wrote a Popular Photography article, again looking at plastic lenses: “Plastic Lenses: Good Enough!” [4], in which he said “we are already deep into the plastic lens revolution.” He estimated that in 1963 five million plastic lenses were manufactured, and that good photographic objectives could be made up to f/8. He suggests that Kodak was reluctant to admit their inexpensive cameras contained plastic lenses, largely due to the perception that the public associated plastic with an inferior product. Kodak instead preferred to use the term “acrylic”. Many companies were at the time using plastic in products such as viewfinders and slide viewers. Lipton’s article was a lengthy one, describing the virtues of plastic (over glass), how plastic had dealt with issues such as striation and changes in temperature, the process of molding lenses, and their limitations.

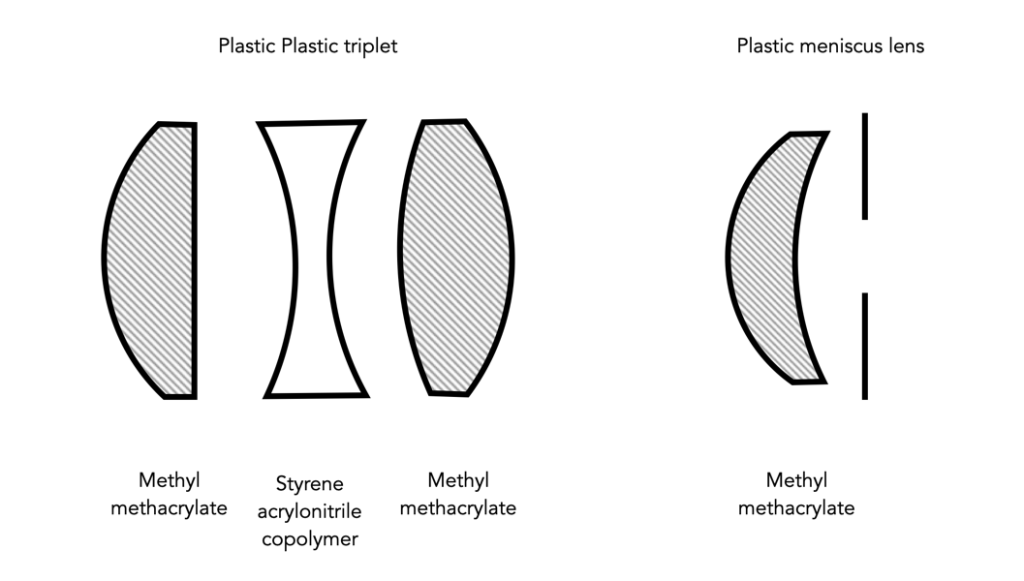

Plastic lenses are typically molded from polymers such as methyl methacrylate (MM), and styrene acrylonitrile copolymer (SAC). Optical glass is chemically nothing like optical grade plastic. Plastic has a definite molecular structure, whereas glass does not. Plastic is basically made from carbon, hydrogen and oxygen, whereas glass can contain a wide variety of materials, e.g. silicon dioxide, barium, boron, lead, and even thorium. The single biggest benefit of plastic is that it could be injection molded. Glass, on the other hand, could not be injection molded as it would produce surface irregularities, which would then have to be ground and polished out (modern glass can be precision molded). Injection molding allowed for complex shapes to be made easily and inexpensively. Early plastic lenses suffered from something called “striation”, whereby a lens has regions with an index of refraction different from the rest of the lens, resulting in fuzzy pictures. It was caused by uneven cooling in the mold, but by the mid-60s this had been eliminated from lenses.

Plastics were said to suffer from defects, e.g. becoming pitted or discoloured. However, as they were usually used in simple, small lenses, this was hardly ever a real issue. Scratching (of the outer lens) was reduced through the use of plastics like Plexiglas V100, another acrylic, which is very hard. The biggest issue with plastic lenses centred around the index of refraction (IR), which is a dimensionless number that indicates the light-bending ability of a medium. The IR of plastics was (and is) rather low compared to optical glass. Acrylic has an IR of 1.49, and styrene acrylonitrile copolymer, 1.57. Compare this against the optical glass of the period, at 1.52 to 1.89. Another problem was the fact that the IR of acrylics decreases as temperature increases, changing the focus. Some plastic lenses were designed to automatically compensate for this, for example the plastic f/8 Cooke triplet, which used lens elements made from both acrylic and SAC. The focus of the acrylic elements (front and rear) increases, while the focus of the middle SAC lens decreases, balancing out any changes in focus.
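To make the effect of a low index more concrete, here is a minimal sketch using the thin-lens lensmaker's equation for a symmetric biconvex element. The 50mm focal length, the 30 degree temperature rise, and the `ASSUMED_DN_DT` value are illustrative assumptions, not figures from the articles cited here, and thermal expansion of the lens itself is ignored.

```python
def biconvex_radius(n: float, focal_length_mm: float) -> float:
    """Surface radius of a thin, symmetric biconvex lens.

    Lensmaker's equation: 1/f = (n - 1) * (1/R1 - 1/R2).
    With R1 = R and R2 = -R this gives R = 2 * (n - 1) * f.
    """
    return 2.0 * (n - 1.0) * focal_length_mm


def biconvex_focal_length(n: float, radius_mm: float) -> float:
    """Inverse of biconvex_radius: focal length for a given index and radius."""
    return radius_mm / (2.0 * (n - 1.0))


FOCAL_LENGTH_MM = 50.0   # hypothetical target focal length
ASSUMED_DN_DT = -1.0e-4  # assumed index change per degree C (illustrative only)
TEMP_RISE_C = 30.0       # hypothetical temperature rise

# A lower index needs a smaller radius (steeper curve) for the same focal length.
for name, n in [("acrylic", 1.49), ("SAC", 1.57), ("high-index glass", 1.89)]:
    radius = biconvex_radius(n, FOCAL_LENGTH_MM)
    print(f"{name}: n={n}, surface radius = {radius:.1f} mm")

# If the index of an acrylic element drops as it warms, its focal length grows.
acrylic_radius = biconvex_radius(1.49, FOCAL_LENGTH_MM)
warm_index = 1.49 + ASSUMED_DN_DT * TEMP_RISE_C
warm_focal_length = biconvex_focal_length(warm_index, acrylic_radius)
print(f"warm acrylic: n={warm_index:.4f}, focal length = {warm_focal_length:.2f} mm")
```

Under these assumptions the acrylic element needs a 49mm surface radius where the high-index glass needs only 89mm, and steeper curves generally make aberrations harder to control. The last line shows the direction of the temperature drift described above: the warmed acrylic element ends up with a slightly longer focal length.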

Lipton went a long way to describe the manufacturing benefits of plastic (and the drawbacks of optical glass) [4]. Optical glass is made by melting raw materials, and the melt is processed once it cools into glass. Glass lenses require a number of steps including grinding, polishing, and testing, which made them expensive to manufacture. Plastic lenses, on the other hand, were simple to manufacture:

“Plastic lenses are made in air conditioned pressurized rooms, and in the case of Plexiglas or Lucite, the plastic, in powder form, is fed to a machine where it is heated and softened. It may be heated to a temperature of 400 to 500 degrees Fahrenheit. The softened plastic is then forced, under a pressure of at least 16,000 pounds per square inch, into a mold where it remains until it cools enough to retain the mold’s shape. The mold is then opened, and the lens is popped out, ready to be used as is, or assembled with other elements with no necessity for working to a finished size.”
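For readers more used to metric units, the molding conditions in Lipton's description can be converted with a few lines of arithmetic. This is only a convenience sketch; the function names are made up for illustration, and the only inputs are the figures quoted above.

```python
PASCALS_PER_PSI = 6894.757  # standard conversion factor for pounds per square inch


def fahrenheit_to_celsius(deg_f: float) -> float:
    """Convert a temperature from degrees Fahrenheit to degrees Celsius."""
    return (deg_f - 32.0) * 5.0 / 9.0


def psi_to_megapascals(psi: float) -> float:
    """Convert a pressure from pounds per square inch to megapascals."""
    return psi * PASCALS_PER_PSI / 1.0e6


# Figures quoted in the passage: 400 to 500 degrees F, at least 16,000 psi.
low_c = fahrenheit_to_celsius(400.0)
high_c = fahrenheit_to_celsius(500.0)
pressure_mpa = psi_to_megapascals(16_000.0)

print(f"Molding temperature: {low_c:.0f} to {high_c:.0f} degrees C")
print(f"Molding pressure: at least {pressure_mpa:.0f} MPa")
```

That works out to roughly 204 to 260 degrees Celsius and about 110 MPa.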

Not that manufacturing optical plastics didn’t have its limitations. It was challenging to mold large diameter optical lenses, lenses with plane surfaces, and those with thick centres and thin edges. Lipton considered two stumbling blocks prohibiting the creation of high-speed 35mm lenses: low refractive indices, and the inability to mold large diameter lenses. In fact Dr. Rudolf Kingslake, director of optical design for Kodak, said of plastic objectives: “It’s the low indices of refraction that are stopping us, it’s just a matter of substituting plastic for glass.” [4].

In 1972 Bob Schwalberg wrote an article describing why glass still reigned supreme [5]. He suggested SLR pentaprisms were a good candidate for conversion to acrylic, which would reduce production costs. Schwalberg outlined five benefits of plastic:

- Lower cost – Raw materials are cheaper, and less expensive to work.

- Complete form freedom – Aspherical (non-spherical curvature) lenses are expensive to make in glass.

- Exceptional clarity – Not all optical glass is perfectly colourless; the highest grades of optical plastics are quite colourless, and their clarity is frequently superior.

- Light weight – Plastic lenses are lighter.

- High impact resistance – Glass is brittle, plastics are flexible.

and five counter-arguments:

- Too limited range of optical specifications – i.e. the refractive index and the dispersion. Refractive indexes for optical plastics are close to 1.5, whereas optical glass ranges from 1.42 to 1.95.

- Poor curve holdability – Accurate lens curvatures are critical for quality performance. Plastic lenses have poor curve conformity because of their high temperature coefficients (see the next point) and their inherent flexibility. Glass is stronger and more stable, and holds curvature much better in the face of external forces.

- High temperature coefficients – The expansion and contraction of optical plastics is much greater than for optical glass. Multi-element plastic lenses have been developed with elements possessing opposing temperature coefficients, but this was considered unthinkable for precision camera lenses.

- Clear plastics are hygroscopic – They absorb airborne moisture. Optical media must be isotropic, i.e. equal in all directions. The absorption of moisture destroys this homogeneity.

- Low abrasion resistance – Plastics are softer and more prone to scratching than optical glass.

Many of the cameras that use(d) plastic lenses are considered to be “toy” cameras. In 1959 Kodak introduced the Starmatic, the top of Kodak’s Brownie line. It had a 44mm f/8 three-element plastic lens. The Lomography Diana appeared in the 1960s, and was made entirely of plastic (and in 1975 cost less than $2). The Polaroid Pronto Land camera (mid 1970s) also had a 116mm 3-element Polatriplet plastic lens. Most Holga cameras had a 60mm f/8 plastic meniscus lens.

But the breakthroughs and sophisticated designs associated with plastic lenses never really materialized. In the end, low refractive indices and the inability to successfully mold large diameter lenses may have been the stumbling blocks to making 35mm lenses from plastic. There are some plastic optical materials [6] that have reached a refractive index as high as 1.68, e.g. PolyEtherImide, but they often suffer from having a lower transmission rate (36-82% for PolyEtherImide, versus 92% for acrylic). Leica APO glass, on the other hand, has a refractive index of 1.9005.
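The link between refractive index and transmission can be sketched with the Fresnel equation for reflection at normal incidence. This is a simplified model of a single uncoated element in air that ignores absorption and multiple internal reflections, so it only gives an upper bound set by surface reflection; the function names are illustrative.

```python
def surface_reflectance(n: float, n_outside: float = 1.0) -> float:
    """Fresnel reflectance at normal incidence for one uncoated surface."""
    return ((n - n_outside) / (n + n_outside)) ** 2


def two_surface_transmission(n: float) -> float:
    """Fraction of light passing both surfaces of an uncoated element in air.

    Ignores absorption in the material and multiple internal reflections.
    """
    return (1.0 - surface_reflectance(n)) ** 2


for name, n in [("acrylic", 1.49), ("PolyEtherImide", 1.68)]:
    limit = two_surface_transmission(n)
    print(f"{name}: n={n}, surface-limited transmission = {limit:.1%}")
```

For acrylic this gives about 92.4%, in line with the 92% figure quoted above, which suggests that acrylic's losses are almost entirely surface reflection. For an index of 1.68 the same model gives about 87.5%, so under these assumptions the much lower figures quoted for PolyEtherImide would have to come from additional losses such as absorption in the material.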

Apart from their use in inexpensive cameras, there is another use of optical plastic: hybrid aspherics. A hybrid aspherical element is a lens element consisting of a glass base upon which plastic is glued, creating the desired aspheric shape. They are typically used in zoom lenses, e.g. the Nikon 28-70mm f/3.5-4.5 AF, first introduced in 1991. Companies like Tamron use hybrid aspherical lenses, likely to reduce the cost of the lenses. Lipton somewhat predicted this use in 1964 [4] when he suggested it would be difficult to grind an aspheric lens in optical glass, yet the manufacture of aspheric lenses in plastic would be no problem. Ironically, many smartphones have lenses which are actually plastic. This is not surprising considering the small size of the lenses required for mobile devices – it is less of a technological challenge, and hence costs less to manufacture (but as manufacturers don’t publish lens diagrams, it’s hard to know). For example, the Leica lenses used in Huawei smartphones are plastic. Are there smartphones with glass elements? Sure, but they are usually quite expensive.

Ultimately, the inability to produce high precision optics is one of the reasons we don’t see more plastic lenses. But there is another, human factor involved in companies shying away from the use of plastics – the perception of quality. Glass is more associated with quality than plastic, whereas plastic is considered “cheap” and disposable. This is largely due to its use in inexpensive cameras, and the stigma attached to plastic itself.

1. Andrew B. Hecht, “And Now Plastic Lenses”, Popular Photography, 18(5), pp.72-74,128 (1946)

2. “Learn from Lenses”, Modern Plastics, 38(7), pp.90-93 (1961)

3. Lloyd E. Varden, “Plastic Lenses”, Popular Photography, 49(2), pp.48,97,98 (August, 1961)

4. Leonard Lipton, “Plastic Lenses: Good Enough!”, Popular Photography, 55(2), pp.44-45,100-101 (August, 1964)

5. Bob Schwalberg, “Plastic optics vs. glass, and why glass still reigns”, Popular Photography, 70(2), pp.52,118 (1972)

6. R. Kingslake, R.B. Johnson, “The Work of the Lens Designer”, in Lens Design Fundamentals, 2nd ed. (2010)

7. C.B. Estes, “Thermally Compensated Plastic Triplet Lens”, Eastman Kodak Company, U.S. Patent No. 3,205,774 (July 24, 1961)

Further reading:

- Andy Day, “Plastic Lenses Are Awesome so Stop Being Sniffy About Them”, November 2018