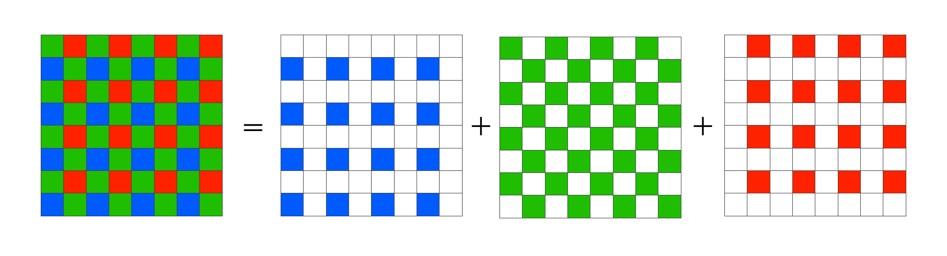

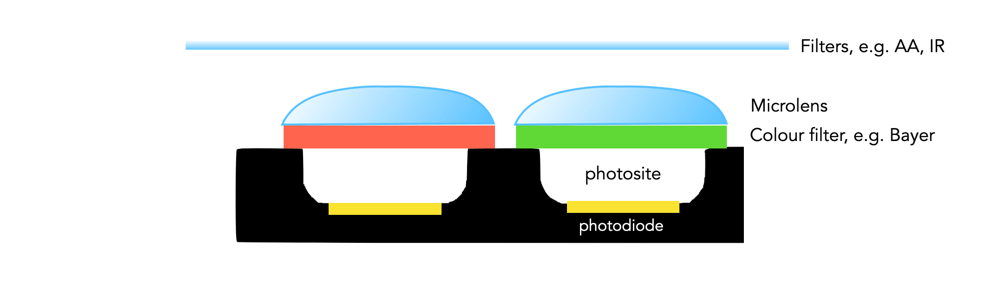

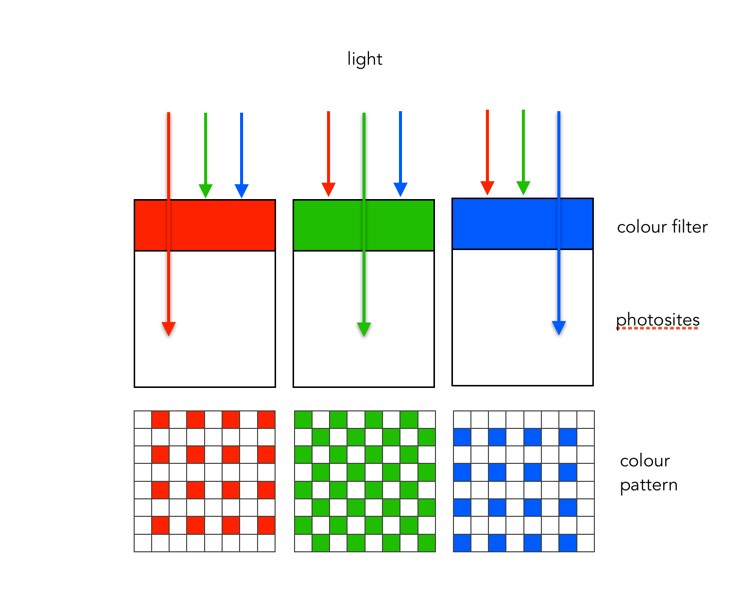

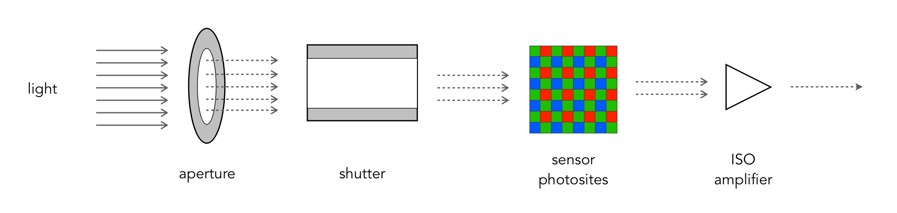

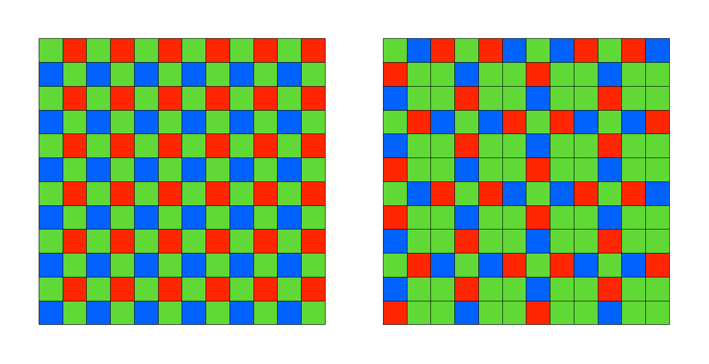

Many digital cameras use the Bayer filter as a means of capturing colour information at the photosite level. Bayer filters have colour filters that repeat in a 2×2 pattern. Some companies, like Fuji, use a different type of filter; in Fuji’s case, the X-Trans filter. The X-Trans filter appeared in 2012 with the debut of the Fuji X-Pro1.
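Because the Bayer tile repeats every 2×2 photosites, the filter colour over any photosite depends only on whether its row and column are odd or even. The minimal sketch below assumes an RGGB ordering (real sensors may start the tile on a different colour, e.g. GRBG or BGGR), and the function names are hypothetical.

```python
from collections import Counter

# Assumed RGGB phase; other sensors may use GRBG, BGGR or GBRG.
BAYER_RGGB = (
    ("R", "G"),
    ("G", "B"),
)

def bayer_colour(row: int, col: int) -> str:
    """Return the filter colour over the photosite at (row, col)."""
    # The 2x2 tile repeats, so only the parity of row and column matters.
    return BAYER_RGGB[row % 2][col % 2]

def colour_fractions(height: int, width: int) -> dict[str, float]:
    """Share of photosites under each colour on a height x width sensor."""
    counts = Counter(bayer_colour(r, c) for r in range(height) for c in range(width))
    total = height * width
    return {colour: counts[colour] / total for colour in "RGB"}

if __name__ == "__main__":
    # Print the top-left corner of the mosaic.
    for r in range(4):
        print(" ".join(bayer_colour(r, c) for c in range(8)))
    print(colour_fractions(4, 6))  # {'R': 0.25, 'G': 0.5, 'B': 0.25}
```

Half of all photosites sit under a green filter and a quarter each under red and blue, which is the baseline the X-Trans layout departs from.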

The problem with regularly repeating patterns of coloured pixels is that they can produce moiré patterns when the photograph contains fine detail. This is normally avoided by placing an optical low-pass filter in front of the sensor. The filter applies a controlled blur to the image, so sharp edges, abrupt colour changes and fine tonal transitions don’t cause problems. This makes the moiré patterns disappear, but at the expense of some image sharpness. In many modern cameras, however, the sensor resolution outstrips the resolving power of the lenses, so the lens itself acts as a low-pass filter and the dedicated low-pass filter has been dispensed with.
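The moiré mechanism can be made concrete with a small Python sketch. It is simplified to one dimension and a single colour, assuming photosites one unit apart and a scene of sinusoidal stripes measured in cycles per photosite. Stripes finer than the Nyquist limit (0.5 cycles per photosite) produce exactly the same samples as much coarser stripes, so the sensor records a pattern that isn’t in the scene. A colour filter array makes this worse for red and blue: in a Bayer mosaic they are sampled only every second photosite, halving their limit. The box blur is a hypothetical, simplified stand-in for a low-pass filter, not a physical model of one, and all function names are illustrative.

```python
import math

def sample_stripes(freq: float, n: int) -> list[float]:
    """Brightness (0..1) of a stripe pattern sampled at n photosite centres."""
    return [0.5 + 0.5 * math.cos(2 * math.pi * freq * i) for i in range(n)]

def alias_frequency(freq: float) -> float:
    """Apparent frequency after sampling once per photosite.

    Frequencies above the Nyquist limit of 0.5 cycles per photosite
    fold back to a lower, false frequency.
    """
    return abs(freq - round(freq))

def box_blur_contrast(freq: float, width: float) -> float:
    """Fraction of stripe contrast left after averaging over `width` photosites.

    Averaging a sinusoid over a window scales it by sin(x) / x,
    with x = pi * freq * width.
    """
    x = math.pi * freq * width
    return 1.0 if x == 0 else abs(math.sin(x) / x)

if __name__ == "__main__":
    fine = sample_stripes(0.9, 10)    # fine stripes, far above Nyquist
    coarse = sample_stripes(0.1, 10)  # coarse stripes, well below it
    # The two sets of samples are indistinguishable: this is aliasing.
    print(all(math.isclose(a, b, abs_tol=1e-9) for a, b in zip(fine, coarse)))  # True
    # Blurring over 2 photosites suppresses the fine stripes much more than the coarse ones.
    print(round(box_blur_contrast(0.9, 2), 2), round(box_blur_contrast(0.1, 2), 2))  # 0.1 0.94
```

The second line shows the trade-off described above: the blur removes roughly 90% of the fine pattern’s contrast, but it also softens genuine coarse detail slightly.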

X-Trans uses a more complex array of colour filters. Rather than the 2×2 RGBG Bayer pattern, the X-Trans colour filter uses a larger 6×6 array, composed of two different 3×3 patterns. Each 3×3 pattern has five green, two red and two blue photosites, so roughly 56% of the light-sensitive photosite elements are green, 22% red and 22% blue. The main reason for this pattern was to eliminate the need for a low-pass filter, because the less regular patterning reduces moiré. In theory, this strikes a balance between the presence of moiré patterns and image sharpness.
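Counting photosites in one tile confirms these proportions and exposes one structural difference from Bayer: in the X-Trans tile, every row and every column contains red, green and blue. The 6×6 layout below is one commonly published depiction, assumed here for illustration (the phase and orientation on an actual sensor may differ), and the function names are hypothetical.

```python
from collections import Counter

# Assumed X-Trans layout: two 3x3 blocks with green on the corners and centre,
# red and blue on the edges, and red/blue swapped between the two blocks.
XTRANS = (
    "GBGGRG",
    "RGRBGB",
    "GBGGRG",
    "GRGGBG",
    "BGBRGR",
    "GRGGBG",
)

# RGGB Bayer tile, for comparison.
BAYER = ("RG", "GB")

def fractions(tile: tuple[str, ...]) -> dict[str, float]:
    """Share of photosites under each colour filter in one repeating tile."""
    counts = Counter("".join(tile))
    total = sum(counts.values())
    return {c: counts[c] / total for c in "RGB"}

def every_line_has_all_colours(tile: tuple[str, ...]) -> bool:
    """True if every row and every column of the tile contains R, G and B."""
    rows = [set(row) for row in tile]
    cols = [set(col) for col in zip(*tile)]
    return all(line == {"R", "G", "B"} for line in rows + cols)

if __name__ == "__main__":
    print({c: round(f, 3) for c, f in fractions(XTRANS).items()})
    # {'R': 0.222, 'G': 0.556, 'B': 0.222}
    print(every_line_has_all_colours(XTRANS), every_line_has_all_colours(BAYER))
    # True False
```

The tile holds 20 green, 8 red and 8 blue photosites out of 36. The Bayer check returns False because each Bayer row holds only two of the three colours.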

The X-Trans filter provides better colour reproduction, boosts sharpness, and reduces colour noise at high ISO. On the other hand, more processing power is needed to convert the raw data into a full-colour image. Some people say it even produces a more pleasing “film-like” grain.

| Characteristic | X-Trans | Bayer |
|---|---|---|
| Pattern | 6×6 allows for more natural colour reproduction. | 2×2 results in more false-colour artifacts. |
| Moiré | Pattern makes images less susceptible to moiré. | Bayer filters contribute to moiré. |
| Optical filter | No low-pass filter, so higher resolution. | A low-pass filter, where fitted, compromises image sharpness. |
| Processing | More complex to process. | Less complex to process. |