Well, mostly it has to do with aesthetics and simplicity, but also authenticity and nostalgia.

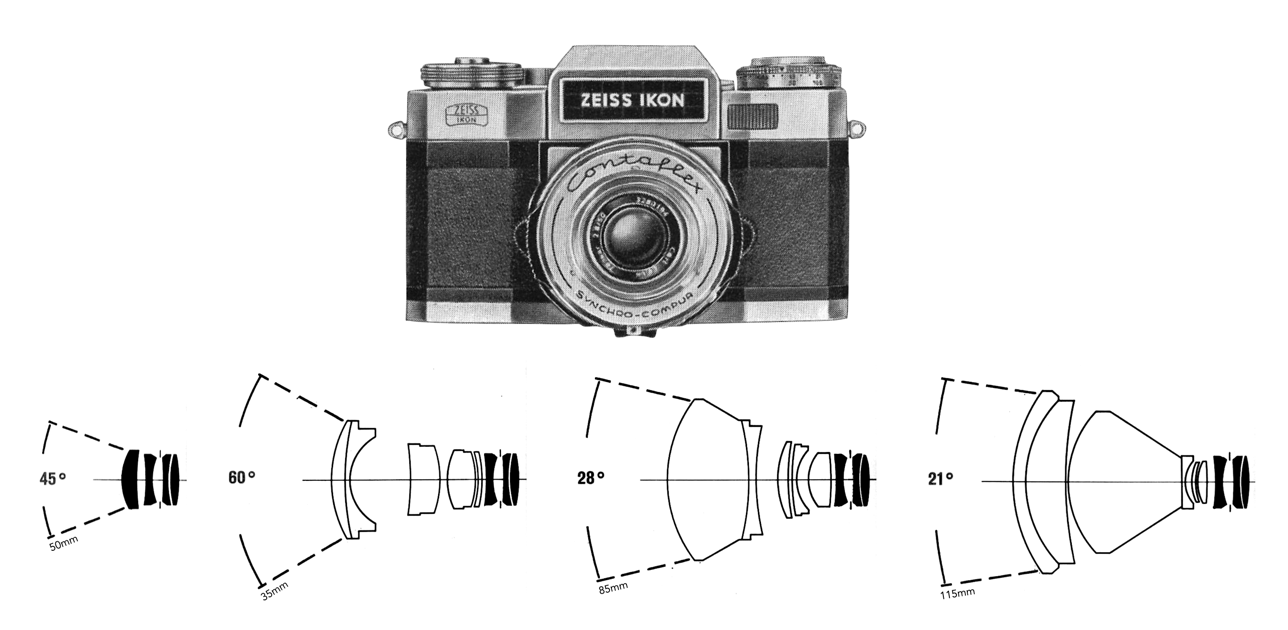

From the dawn of the 35mm SLR in 1936 until the mid-1960s, most cameras were manufactured using traditional engineering methods and the materials once associated with precision: brass, steel, aluminum, and an abundance of glass. These cameras were often very heavy, and crammed full of mechanical parts. As the 1970s progressed, so too did a revolution in materials and technology that would seal the fate of the German camera industry and elevate the Japanese one. There was little room for the hand-crafted camera in the world of the mass-market SLR. In some ways, this shift also led to an interest in “classic” cameras from the 1950s and 60s. Camera designers used increasing amounts of plastic, in everything from camera casings to film-transport gearing. These cameras were also chock full of electronics: electronically timed shutters allowed for greater accuracy and a wider range of shutter speeds, replacing mechanical systems derived from the watch industry. Electronic exposure-measurement systems also replaced the electro-mechanical galvanometer systems of old. All these enhancements made cameras lighter, offered more functionality, and, probably most significantly, reduced their cost.

However, the changes of the electronic age came at a cost. In the case of cameras, that cost was a loss of aesthetic appeal, engineering quality, “toughness”, and hand-crafted precision. The industry had swapped the engineer and craftsman for the scientist and technician. The chrome top-plate, so common amongst SLRs, had been replaced by plastic. Even lenses would become encased in plastic, perhaps as a means of making them lighter and more resilient. That’s not to say the cameras were bad, just that they had lost something in the translation to the modern era.

Cameras from the mid-1980s to the 1990s may be especially problematic. There is a darker side to these purely electronic cameras: they need a power source, and electronics sometimes age poorly. It may be possible, albeit costly, to fix a manual camera, but failed electronic components are often almost impossible to repair. Some of these cameras were also left on the shelf with batteries in them, and batteries leak over time, causing further damage.

In many respects, these pre-1970s cameras are what some may term “classic” cameras. What this means is somewhat subjective: to some it might mean meticulously engineered and aesthetically pleasing. To others it might be a mechanical odd-ball, or a camera that was the first to introduce some new feature or innovation. Sometimes, though, it is pure nostalgia: the ability to take pictures with a classic camera, made by a classic camera company, before many of those companies went the way of the dodo. Vintage cameras help capture the true aesthetic of film, before things became too automated. Of course, the camera itself is only part of the story, a vessel so to speak, because much of the real character comes from the individual lenses of the period and the distinct characteristics of the film being used (grain, colour rendition, etc.).

Some people long for an analog past, for cameras in which you can experience the craftsmanship that went into building them. They may not be perfect anymore, but many have stood the test of time much better than their more modern brethren.