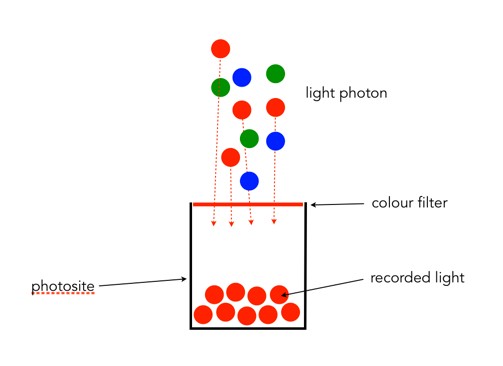

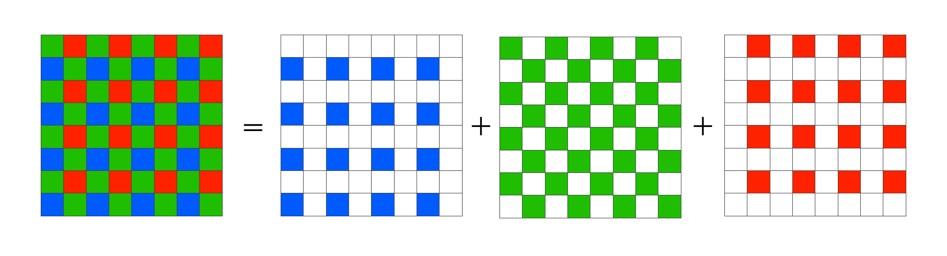

Without the colour filters in a camera sensor, the images acquired would be monochromatic. The most common colour filter array used in cameras is the Bayer filter array. The pattern was introduced by Bryce Bayer of Eastman Kodak Company in a patent filed in 1975 (U.S. Patent No. 3,971,065). The raw output of the Bayer array is called a Bayer pattern image. The most common arrangement of colour filters in Bayer uses a mosaic of the RGGB quartet, where every 2×2 pixel square is composed of a red and a green pixel on the top row, and a green and a blue pixel on the bottom row. This means that each photosite samples only one colour, rather than a full red-green-blue triplet. The accompanying image shows how the Bayer mosaic is decomposed.
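To make the decomposition concrete, here is a minimal sketch in plain Python. It assumes the layout described above (red and green on the top row, green and blue on the bottom row of each 2×2 square); the function names `bayer_colour` and `decompose`, and the sample values in `raw`, are made up for illustration and do not come from any camera library.

```python
def bayer_colour(row, col):
    """Return the filter colour over the photosite at (row, col).

    Assumes a 2x2 tile with R, G on the top row and G, B on the bottom row.
    """
    if row % 2 == 0:
        return "R" if col % 2 == 0 else "G"
    return "G" if col % 2 == 0 else "B"


def decompose(raw):
    """Split a raw mosaic (a list of rows) into three sparse colour planes.

    Positions where a colour was not sampled are left as None.
    """
    planes = {name: [[None] * len(raw[0]) for _ in raw] for name in "RGB"}
    for r, line in enumerate(raw):
        for c, value in enumerate(line):
            planes[bayer_colour(r, c)][r][c] = value
    return planes


# A made-up 4x4 raw mosaic: one intensity value per photosite.
raw = [
    [10, 20, 11, 21],
    [22, 30, 23, 31],
    [12, 24, 13, 25],
    [26, 32, 27, 33],
]

planes = decompose(raw)
for name in "RGB":
    print(name)
    for line in planes[name]:
        print("  ", line)
```

Each plane has gaps wherever that colour was not sampled, which is exactly the missing data a later interpolation step has to fill in.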

But why are there more green filters? This is largely because human vision is most sensitive to green light, so the ratio is 50% green, 25% red and 25% blue. So in a sensor with 4000×6000 pixels (24 million photosites), 12 million would be green, and red and blue would have 6 million each. The green photosites are used to gather luminance information. The red and blue channels each have half as many samples as the green channel. However, human vision is much more sensitive to luminance resolution than it is to colour information, so this is usually not an issue. An example of what a “raw” Bayer pattern image would look like is shown in the accompanying image.
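The arithmetic behind the 50/25/25 split can be checked in a few lines. This sketch assumes both sensor dimensions are even, so the sensor divides cleanly into 2×2 tiles; `bayer_counts` is a hypothetical helper name.

```python
def bayer_counts(height, width):
    """Count photosites per colour on a Bayer sensor with even dimensions."""
    # Every 2x2 tile contributes one red, two green and one blue photosite.
    tiles = (height // 2) * (width // 2)
    return {"total": height * width, "R": tiles, "G": 2 * tiles, "B": tiles}


counts = bayer_counts(4000, 6000)
for name, value in counts.items():
    print(f"{name}: {value:,}")
```

For the 4000×6000 sensor this gives 24,000,000 photosites in total: 12,000,000 green, 6,000,000 red and 6,000,000 blue.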

So how do we get pixels that are full RGB? To obtain a full-colour image, a demosaicing algorithm has to be applied to interpolate a set of red, green, and blue values for each pixel. These algorithms use the surrounding pixels of the corresponding colours to estimate the values missing at a particular pixel. The simplest form of algorithm averages the surrounding pixels to derive the missing data. The exact algorithm used depends on the camera manufacturer.
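As a rough illustration of the "average the surrounding pixels" idea, the sketch below fills in each missing colour with the mean of the same-coloured photosites in the 3×3 neighbourhood. It is not any manufacturer's algorithm, only the simplest possible version. It assumes a tile with red and green on the top row and green and blue on the bottom row, and an image of at least 2×2 photosites; `bayer_colour` and `demosaic_average` are made-up names.

```python
def bayer_colour(row, col):
    """Filter colour at (row, col), assuming R, G on top and G, B below."""
    if row % 2 == 0:
        return "R" if col % 2 == 0 else "G"
    return "G" if col % 2 == 0 else "B"


def demosaic_average(raw):
    """Return a grid of (R, G, B) tuples estimated by neighbourhood averaging."""
    height, width = len(raw), len(raw[0])
    out = [[None] * width for _ in range(height)]
    for r in range(height):
        for c in range(width):
            sums = {"R": 0.0, "G": 0.0, "B": 0.0}
            hits = {"R": 0, "G": 0, "B": 0}
            # Collect every sample in the 3x3 window, clipped at the borders.
            for dr in (-1, 0, 1):
                for dc in (-1, 0, 1):
                    rr, cc = r + dr, c + dc
                    if 0 <= rr < height and 0 <= cc < width:
                        colour = bayer_colour(rr, cc)
                        sums[colour] += raw[rr][cc]
                        hits[colour] += 1
            own = bayer_colour(r, c)
            pixel = {}
            for colour in "RGB":
                if colour == own:
                    # Keep the value this photosite actually measured.
                    pixel[colour] = float(raw[r][c])
                else:
                    # Estimate the missing colour from its neighbours.
                    pixel[colour] = sums[colour] / hits[colour]
            out[r][c] = (pixel["R"], pixel["G"], pixel["B"])
    return out


# A flat, made-up scene: every red site reads 100, green 50, blue 20.
scene = {"R": 100, "G": 50, "B": 20}
raw = [[scene[bayer_colour(r, c)] for c in range(4)] for r in range(4)]

rgb = demosaic_average(raw)
print(rgb[0][0], rgb[1][2])
```

On a perfectly uniform scene like this one the averaging recovers the same colour at every pixel. On real images, plain averaging tends to blur fine detail and produce colour fringes along edges, which is one reason practical demosaicing algorithms are more elaborate.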

Of course, Bayer is not the only filter pattern. Fujifilm created its own version, the X-Trans colour filter array, which uses a larger 6×6 pattern of red, green, and blue filters.