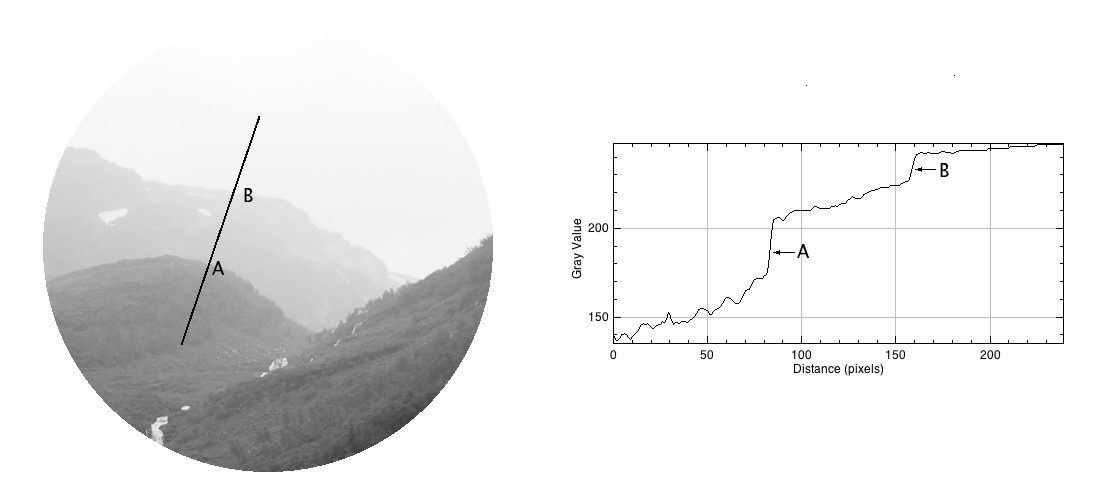

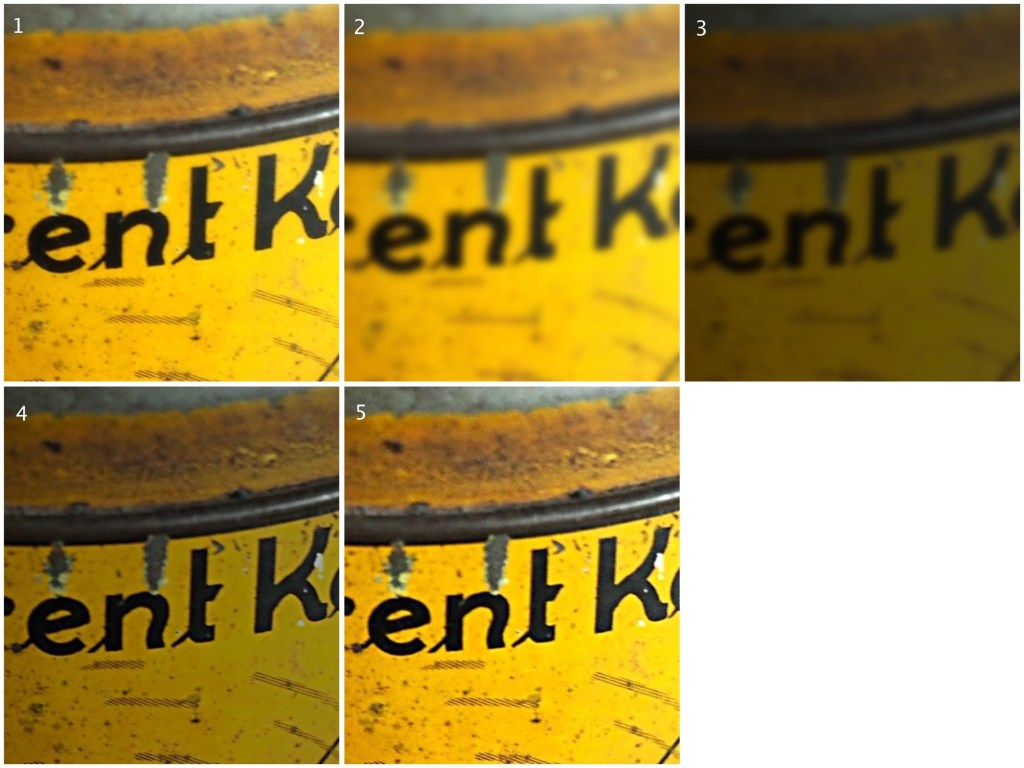

Some photographs contain blur that is very challenging to remove. Large-scale blur caused by motion or defocus can’t really be suppressed in any meaningful manner. What image sharpening algorithms can usually achieve is to make the finer structures in an image look crisper. Take, for example, the coffee can image shown below, in which the upper lettering on the label is almost in focus, while the lower lettering has the softer appearance associated with defocus.

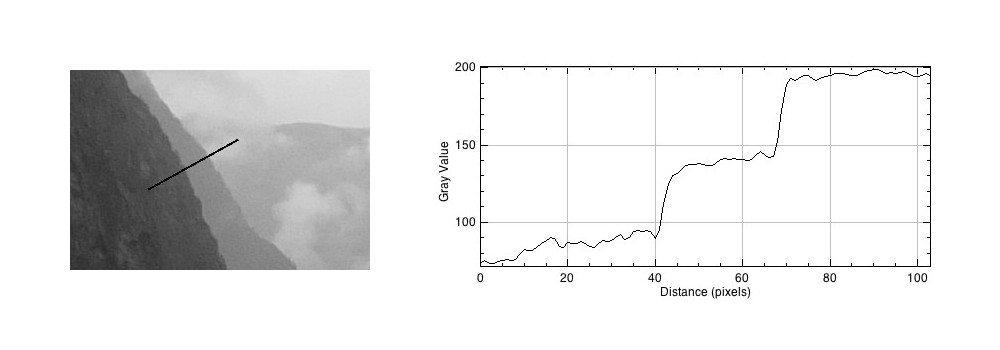

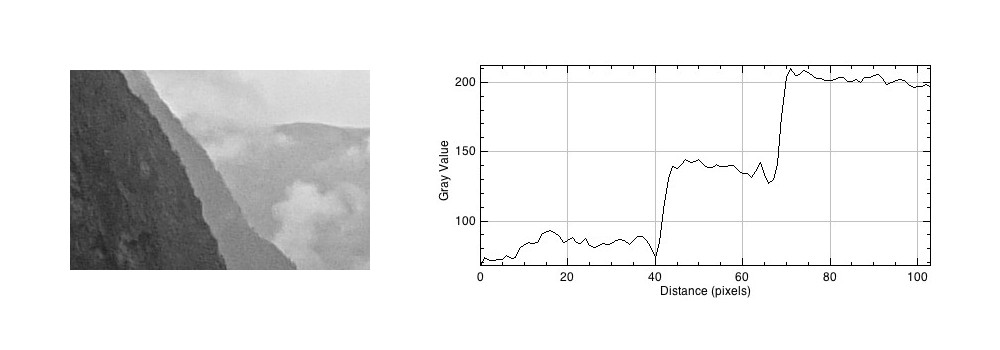

Part of the problem with this image is that the blur is not uniform. Below are two enlarged regions containing text from opposite ends of the blur spectrum.

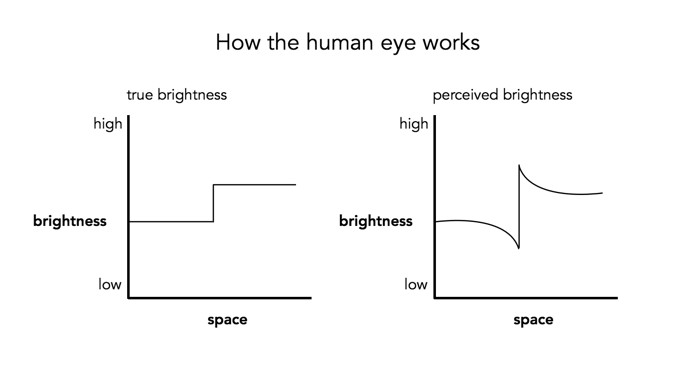

Reducing blur involves a concept known as image sharpening (which is different from removing motion blur, a much more challenging task). The easiest technique for image sharpening, and the one most often found in software such as Photoshop, is known as unsharp masking. It is derived from analog photography, and basically works by subtracting a blurry version of the original image from the original image. It is by no means perfect, and is problematic in noisy images because it tends to accentuate the noise, but it is simple.
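Written out, the textbook form of the idea looks roughly like the following. The symbols are my own shorthand rather than anything taken from a particular program: $I$ is the original image, $G_\sigma * I$ is a Gaussian-blurred copy, $M$ is the detail layer left after the subtraction, and $a$ is a strength factor (often called the "amount").

$$
M = I - G_\sigma * I, \qquad S = I + a\,M
$$

Because blurring smooths away fine-grained noise, most of that noise ends up in $M$ and is then added back scaled by $a$, which is why noisy images tend to fare badly.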

Here I am using the “Unsharp Mask” filter from ImageJ. It subtracts a blurred copy of the image and rescales the result so that low-frequency structures keep the same contrast as in the input image. It works in the following manner:

- Obtain a Gaussian-blurred copy of the image by specifying a blur radius (in the example below, the radius = 5).

- Scale the blurred image by the “Mask Weight”, which determines the strength of filtering and takes a value from 0.1 to 0.9 (in the example below, the mask weight = 0.4).

- Subtract the weighted blurred image from the original image.

- Divide the resulting image by (1.0 - mask weight), which is 0.6 in the case of the example.
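The steps above can be sketched in a few lines of plain Python. This is only an illustration under stated assumptions, not ImageJ's actual code: the function names are hypothetical, the image is a list of rows of floats, the blur radius is treated as the Gaussian standard deviation, the mask weight step is taken to mean multiplying the blurred image by the weight, and no clipping to a valid pixel range is done.

```python
import math


def gaussian_kernel(sigma):
    """Normalized 1-D Gaussian weights, truncated at roughly 3 sigma."""
    half = max(1, math.ceil(3 * sigma))
    weights = [math.exp(-(i * i) / (2.0 * sigma * sigma))
               for i in range(-half, half + 1)]
    total = sum(weights)
    return [w / total for w in weights]


def blur_1d(values, kernel):
    """Convolve one row or column, repeating the edge pixels at the borders."""
    half = len(kernel) // 2
    last = len(values) - 1
    out = []
    for i in range(len(values)):
        acc = 0.0
        for k, w in enumerate(kernel):
            j = min(max(i + k - half, 0), last)  # clamp index to the image
            acc += w * values[j]
        out.append(acc)
    return out


def gaussian_blur(image, sigma):
    """Separable Gaussian blur: filter every row, then every column."""
    kernel = gaussian_kernel(sigma)
    rows = [blur_1d(row, kernel) for row in image]
    cols = [blur_1d(list(col), kernel) for col in zip(*rows)]
    return [list(row) for row in zip(*cols)]  # transpose back to rows


def unsharp_mask(image, sigma=5.0, weight=0.4):
    """(original - weight * blurred) / (1 - weight), pixel by pixel."""
    if not 0.0 <= weight < 1.0:
        raise ValueError("weight must be in [0, 1)")
    blurred = gaussian_blur(image, sigma)
    return [[(p - weight * b) / (1.0 - weight) for p, b in zip(row, brow)]
            for row, brow in zip(image, blurred)]


# Hypothetical test image: a hard vertical edge, dark left, bright right.
edge = [[0.0] * 8 + [1.0] * 8 for _ in range(4)]
sharpened = unsharp_mask(edge, sigma=2.0, weight=0.4)
print([round(v, 2) for v in sharpened[0]])
```

Algebraically, (I - wB) / (1 - w) is the same as I + (w / (1 - w)) × (I - B), so a mask weight of 0.4 amounts to adding roughly 0.67 times the detail layer back onto the original. The division by (1 - w) is what leaves flat, low-frequency areas at their original brightness.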

If we compare the resulting images, using an enlarged region, we find that the unsharp masking filter has slightly improved the sharpness of the text, but this may also be attributed to the slight enhancement in contrast. This part of the original image has less blur, though, so let’s apply the filter to the second region.

Below is the result on the second portion of the image. There is next to no improvement in the sharpness of the image. So while it may be possible to slightly improve sharpness where the picture is not badly blurred, excessive blur is impossible to “remove”. Improvements in acuity may be due more to the slight contrast adjustments and how they are perceived by the eye.