It is a camera without a mirror, of course!

Next you’ll ask why a camera would ever need a mirror.

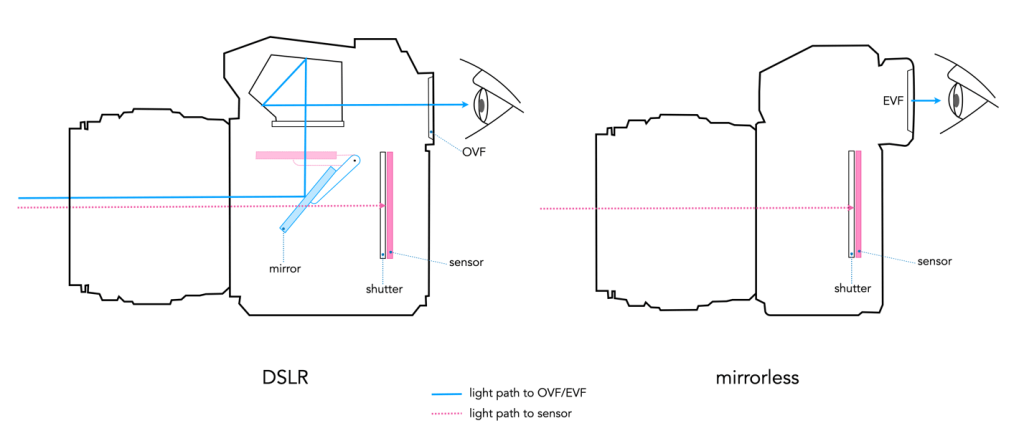

Over the last few years we have seen increasing use of the term “mirrorless” to describe cameras. But what does it mean? Well, 35mm SLR (Single Lens Reflex) film cameras all contained a reflex mirror. Light (i.e. the view) coming through the lens would otherwise fall on the film, but the mirror redirects it up through a pentaprism to the optical viewfinder (OVF), where the photographer sees it. This means that the photographer sees exactly what the lens sees. Without the mirror, the photographer would have to view the scene through an offset window, as in a rangefinder camera (rangefinders were, technically, mirrorless). When the photographer presses the shutter-release button, the mirror swings out of the way. This briefly blacks out the viewfinder and lets the light pass through the opened shutter onto the film. This is depicted visually in Figure 1.

When DSLR (Digital Single Lens Reflex) cameras appeared, they used similar technology. The problem is that the mirror, together with the digital electronics, made these cameras larger than traditional film SLRs. The concept of mirrorless cameras appeared in 2008 with the introduction of the Micro Four Thirds system, and the first mirrorless interchangeable lens camera was the Panasonic Lumix DMC-G1. It replaced the optical path of the OVF with an electronic viewfinder (EVF), making it possible to remove the mirror completely and so reduce the size of the camera. The EVF displays the image output by the sensor on a small LCD or OLED screen.

As a result of nixing the mirror, mirrorless cameras typically have fewer moving parts and a shorter distance between the lens mount and the sensor, which makes them slimmer than DSLRs. That short distance also makes it easier to adapt vintage lenses for use on digital cameras, since it leaves room for an adapter between the lens and the body. Some people still prefer an OVF, because it gives a direct optical view and uses far less battery power than an EVF.

These days, cameras that still contain mirrors are mostly full-frame DSLRs, and even these are slowly disappearing, replaced by mirrorless cameras. Virtually all recent crop-sensor cameras are mirrorless, and DSLR sales continue to decline. Looking only at interchangeable lens cameras (ILCs), CIPA figures show that in 2022 mirrorless cameras made up 68.7% of the 5.927 million ILC units shipped (4.07M mirrorless versus 1.85M DSLRs) and 85.8% of shipment value.