If you are starting to learn about image processing then you will likely be dealing with 8-bit grayscale images. This effectively means that they contain 2^8 or 256 different shades of gray, from 0 (black) to 255 (white). They are the simplest form of image to create image processing algorithms for. There are some image types that are more than 8-bit, e.g. 10-bit (1024 shades of gray), but in reality these are only used in specialist applications. Why? Don’t more shades of gray mean a better image? Not necessarily.

The main reason? Blame the human visual system. It is designed for colour, having three types of cone photoreceptor for conveying colour information, which allow humans to perceive approximately 10 million unique colours. It has been suggested that, from the perspective of grays, human eyes cannot perceive the difference between 32 and 256 graylevel intensities (there is only one type of photoreceptor that deals with black and white). So 256 levels of gray are really for the benefit of the machine, and although the machine would be just as happy processing 1024, that many are likely not needed.

Here is an example. Consider the following photo of the London Blitz, WW2 (New Times Paris Bureau Collection).

This is a nice grayscale image, because it has a good distribution of intensity values from 0 to 255 (which is not always easy to find). Here is the histogram:

![]()
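For readers who want to check the intensity distribution of their own images, here is a minimal sketch of how such a histogram could be computed. It assumes NumPy and an image already loaded as a 2-D `uint8` array; the function name `gray_histogram` and the synthetic ramp image are illustrative only and are not taken from the original post.

```python
import numpy as np


def gray_histogram(image: np.ndarray) -> np.ndarray:
    """Count how many pixels take each of the 256 possible 8-bit values."""
    if image.dtype != np.uint8:
        raise ValueError("expected an 8-bit (uint8) image")
    # minlength=256 keeps empty bins, so the result always has 256 entries
    return np.bincount(image.ravel(), minlength=256)


# Synthetic stand-in for a real photo: a ramp from black to white, 4 rows tall
ramp = np.tile(np.arange(256, dtype=np.uint8), (4, 1))
hist = gray_histogram(ramp)
```

A histogram that is spread across most of the 256 bins, rather than bunched up at one end, is what "a good distribution of intensity values" looks like in practice.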

Now consider the image, reduced to 8, 16, 32, 64, and 128 intensity levels. Here is a montage of the results, shown in the form of a region extracted from the original image.

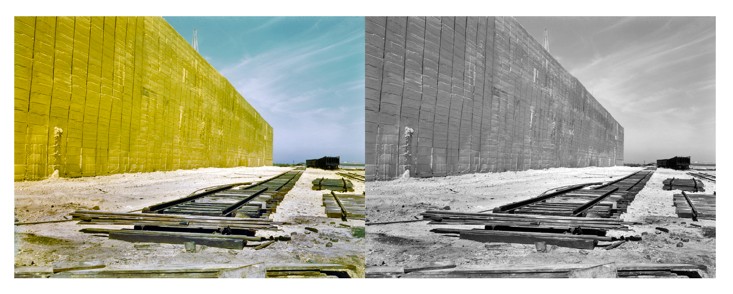
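The reduction in intensity levels can be sketched as a uniform quantization. This is one plausible way to do it, not necessarily the method used to produce the montage: each pixel is assigned to one of `levels` equal-width bins, and the bin indices are then stretched back over 0 to 255 so the result is still an ordinary 8-bit image. The name `reduce_levels` is hypothetical, and NumPy is assumed.

```python
import numpy as np


def reduce_levels(image: np.ndarray, levels: int) -> np.ndarray:
    """Uniformly quantize an 8-bit grayscale image to `levels` gray values."""
    if image.dtype != np.uint8:
        raise ValueError("expected an 8-bit (uint8) image")
    if not 2 <= levels <= 256:
        raise ValueError("levels must be between 2 and 256")
    # Map each pixel to a bin index in the range 0 .. levels - 1
    bins = (image.astype(np.int32) * levels) // 256
    # Spread the bin indices back over 0 .. 255 so the image stays displayable
    return (bins * 255 // (levels - 1)).astype(np.uint8)


# Synthetic test image containing every 8-bit value exactly once
ramp = np.arange(256, dtype=np.uint8).reshape(16, 16)
reduced = {n: reduce_levels(ramp, n) for n in (8, 16, 32, 64, 128)}
```

Stretching the output back to the full 0 to 255 range keeps black and white where they were, so any visible change comes from the loss of intermediate shades rather than from a shift in overall brightness.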

Note that there is very little perceivable difference, except at 8 intensity levels, where the image starts to become somewhat grainy. Now consider a comparison of this enlarged region showing only 256 (left) versus 32 (right) intensity levels.

Can you see the difference? There is very little, especially when viewed in the overall context of the complete image.

Many historic images look like they are grayscale, but in fact they are anything but. They may be slightly yellowish or brown in colour, either due to the photographic process or due to aging of the photographic medium. There is no benefit to processing these types of photographs as colour images, however; they should be converted to 8-bit grayscale.
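As a rough illustration of that conversion, the sketch below collapses a toned RGB scan to a single 8-bit channel using a weighted sum of the three channels. The Rec. 601 luma weights (0.299, 0.587, 0.114) are a common choice but are an assumption here, as are the function name `to_gray8` and the sample brownish pixel value; NumPy is assumed.

```python
import numpy as np


def to_gray8(rgb: np.ndarray) -> np.ndarray:
    """Collapse an H x W x 3 uint8 RGB image to a single 8-bit gray channel."""
    if rgb.dtype != np.uint8 or rgb.ndim != 3 or rgb.shape[2] != 3:
        raise ValueError("expected an H x W x 3 uint8 image")
    # Rec. 601 luma weights (assumed here; other weightings are possible)
    weights = np.array([0.299, 0.587, 0.114])
    gray = rgb.astype(np.float64) @ weights
    # Round to the nearest integer and clamp to the valid 8-bit range
    return np.clip(np.rint(gray), 0, 255).astype(np.uint8)


# Illustrative stand-in for a brown-toned scan: a 2 x 2 patch of one colour
toned = np.full((2, 2, 3), (112, 66, 20), dtype=np.uint8)
gray = to_gray8(toned)
```

The result has one value per pixel instead of three, so it can be fed directly into any algorithm written for 8-bit grayscale images.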