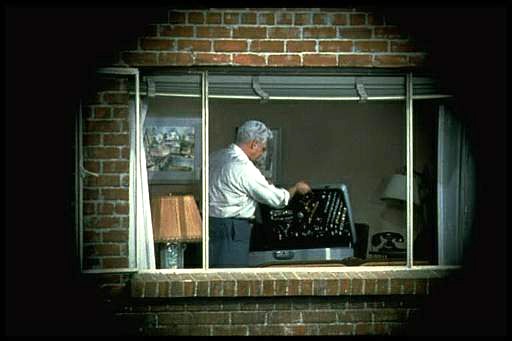

Last week I watched Rear Window, an Alfred Hitchcock-directed thriller from 1954 starring James Stewart and Grace Kelly. The story follows photojournalist L.B. “Jeff” Jefferies, who breaks his leg while shooting an action shot at a car race (supposedly working for LIFE Magazine). Confined to a wheelchair in his New York apartment, he spends time watching the occupants of neighbouring apartments through his apartment’s rear window as they go about their daily lives. He begins to suspect that a man across the courtyard may have murdered his wife. Jeff enlists the help of his high-society fashion-consultant girlfriend Lisa Fremont and his visiting nurse Stella to investigate. It’s a great movie from a period when life was likely a little simpler than it is now.

For the early part of the movie, Jeff is just looking out the window, bored with being confined to his apartment while his cast-covered leg recovers. When he deduces something is amiss across the courtyard, he pulls out his camera with its telephoto lens to view the scene a little closer. The courtyard was supposedly 98′ wide and 185′ in length.

The 35mm film camera used by Jeff is an Exakta VX Ihagee Dresden, with the Exakta logo covered by a piece of black tape in the movie. Why choose the Exakta? At the time the film was shot, there were really only three 35mm camera systems with global recognition: Leica, Contax, and Exakta. Hitchcock could have used a Leica with a reflex housing for the telephoto lens (e.g. Visoflex II), but a single-lens reflex with a prism viewfinder was the more elegant solution. Why was the brand covered with black tape? To cover up its East German / Communist origins? This may have played a role, but more likely it was just a way to avoid advertising in the film.

The Exakta is an interesting choice of camera for the period. It was made by Ihagee Kamerawerk Steenbergen & Co of Dresden, in the former East Germany, and this model was produced between 1951 and 1956. The Exakta is notable as being the first ever Single Lens Reflex (SLR) camera for both 127 roll film (1933) and 135 format 35mm film (1936). It’s not surprising that Jeff was using an Exakta: before Japanese manufacturers started to dominate the camera market, the Exakta led it, capturing perhaps 95% of SLR sales (they did kind of invent the 35mm SLR in 1936). The lens being used on the camera is a Kilfitt Fern-Kilar f/5.6 400mm telephoto lens.

A number of things about the use of the camera are of interest. I know this is a movie, and the camera was used as a prop, but here goes. Firstly, as a press photographer, it is unlikely he would have used a 400mm lens. Jeff’s character was supposedly based on war photographer Robert Capa, who used a Contax II with a 50mm lens. (Ironically, Capa was killed covering the First Indochina War in 1954, which is where Jeff’s editor wanted to send him.) A 400mm lens would be more useful for a sports photographer shooting field-based sports like football (soccer), or for a bird watcher. The lens Jefferies uses to take the photograph on the racetrack is clearly a wide-angle (and the shot is frankly taken from a very dangerous viewpoint).

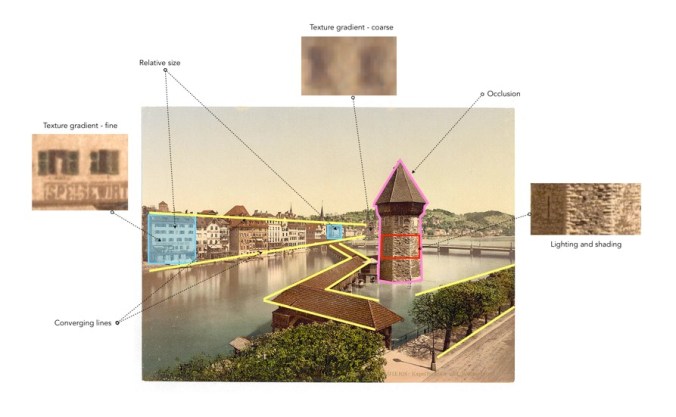

Next there is the issue of the view through the lens itself, which seems to be solely for cinematic effect. Hitchcock shows a circular view to imply that we are looking through a camera, but camera views are rectangular. Then there is the “focal length” of the lens, which seems to be quite flexible. There are two scenes (shown below) taken seconds apart in Thorwald’s apartment, both viewed through the Kilfitt Fern-Kilar 400mm lens. One shows a close-up of Lisa’s hand behind her back (showing where she has slipped on the victim’s wedding ring). This would mean that the 400mm lens had the ability to zoom, likely acting like an 800-1200mm lens, which was not possible. There is also the issue of light intensity, which doesn’t seem to change, even though it is nighttime. The wonders of artistic license.

The field-of-view for the 400mm lens is about right for most shots, at 8-9 feet horizontally and 5-6 feet vertically. At times it looks as though Jeff is taking photos; however, the shutter release button is on the photographer’s left side of the camera, so from this we can tell he did not take any photographs. In addition, Jeff never actually cocks the shutter, which is a requirement for looking through the viewfinder: the mirror stays up after an exposure, so the viewfinder is dark, and cocking the shutter returns the mirror to its normal position (and transports the film to the next exposure).
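The 8-9 by 5-6 foot figure can be roughly checked with similar triangles. The sketch below is only an approximation: it assumes a standard 36 × 24 mm film frame, a subject far enough away that the lens is effectively focused at infinity, and a shooting distance equal to the 98′ courtyard width quoted earlier (the actual distance from Jeff’s window to Thorwald’s is not given). The function name and parameters are my own, made up for illustration.

```python
# Rough field-of-view estimate for a lens on 35mm film.
# Assumptions (not from the film): 36 x 24 mm frame, subject distance
# much larger than the focal length, distance = courtyard width (98 ft).

FRAME_WIDTH_MM = 36.0
FRAME_HEIGHT_MM = 24.0


def field_of_view_ft(distance_ft, focal_length_mm):
    """Return (width, height) in feet of the scene covered at distance_ft.

    Uses similar triangles: scene size / distance = frame size / focal length.
    """
    width_ft = distance_ft * FRAME_WIDTH_MM / focal_length_mm
    height_ft = distance_ft * FRAME_HEIGHT_MM / focal_length_mm
    return width_ft, height_ft


if __name__ == "__main__":
    courtyard_width_ft = 98.0
    # 400mm is the Fern-Kilar; 800mm and 1200mm are the focal lengths the
    # close-up of Lisa's hand would seem to need.
    for focal_length_mm in (400, 800, 1200):
        w, h = field_of_view_ft(courtyard_width_ft, focal_length_mm)
        print(f"{focal_length_mm}mm: {w:.1f} ft wide x {h:.1f} ft high")
```

Under these assumptions the 400mm lens covers about 8.8 by 5.9 feet, which agrees with the estimate above, while doubling or tripling the focal length shrinks the view in proportion.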

Which leads us to the issue of photographs. Why would a photojournalist, who takes photographs for a living, not take any photographs of things happening across the courtyard? If he had taken some photographs, then he would at least have had pictures of suspicious behaviour to show his friend Det. Lt. Doyle. But not once did we hear Jefferies depress the shutter button (and you would hear it because it is noisy). He may have taken photographs at other times, but not during the scenes shown in the movie.

P.S. The lens was manufactured by Heinz Kilfitt Optische Fabrik (1946-64) of Munich, West Germany. Kilfitt was an innovative lens maker, producing the world’s first 35mm macro lens, the Kilfitt 4 cm f/3.5 Makro-Kilar, in 1955.

Further reading:

Tracking Down and Testing the Camera from ‘Rear Window’ (1954), Thomas Bloomfield (PetaPixel, 2024)